Hard skills include programming languages, version control, software development methodologies, and debugging techniques. Developers must master these to create efficient and reliable applications.

Popular Developer Resume Examples

Check out our top developer resume examples that showcase critical skills such as coding proficiency, problem-solving abilities, and project management experience. These examples will help you highlight your accomplishments in a way that resonates with potential employers.

Ready to build your ideal resume? Our Resume Builder offers user-friendly templates designed specifically for developers, making it simple to create a standout application.

Entry-level developer resume

This entry-level resume for a developer highlights the job seeker's technical skills and achievements, showcasing a solid foundation in software development through relevant projects and certifications. New professionals must demonstrate problem-solving abilities and collaborative experience to appeal to employers, even with limited job history.

Mid-career developer resume

This resume effectively showcases the job seeker's diverse development experience and leadership abilities, indicating readiness for more significant challenges. The focus on mentoring and optimizing projects highlights a proactive approach to professional growth and team enhancement, essential for advanced roles in tech.

Experienced developer resume

This resume highlights the applicant's extensive experience in software development, emphasizing significant achievements such as a 45% improvement in app speed and a 30% reduction in data breaches. The bullet points provide clarity, making it easy to recognize key accomplishments at a glance.

Resume Template—Easy to Copy & Paste

Aya Johnson

Louisville, KY 40205

(555)555-5555

aya@example.com

Professional Summary

Dynamic Developer with 8 years' experience boosting performance. Expert in coding, scalability, and tech solutions with a focus on data-driven apps and efficient deployment.

Work History

Developer

Tech Innovators Inc. - Louisville, KY

April 2023 - April 2026

- Boosted app performance by 30%.

- Developed data-driven features for 5 apps.

- Led team to reduce bugs by 25%.

Software Engineer

Digital Solutions Co. - Louisville, KY

February 2018 - March 2023

- Optimized code efficiency by 20%.

- Created scalable API solutions.

- Implemented CI/CD reducing deploy time.

Programmer Analyst

Innovate Tech Labs - Louisville, KY

April 2016 - January 2018

- Automated 50% of test processes.

- Designed efficient database schemas.

- Streamlined user interface experience.

Skills

- JavaScript

- React.js

- Node.js

- Python

- AWS

- SQL

- Agile Methodologies

- Git

Certifications

- AWS Certified Solutions Architect - Amazon Web Services

- Certified Scrum Master - Scrum Alliance

Education

Master of Science, Computer Science

Stanford University - Crestwood, KY

May 2016

Bachelor of Science, Information Technology

University of Washington - Crestwood, KY

May 2014

Languages

- Spanish - C2 (Proficient)

- French - B1 (Intermediate)

- German - A1 (Beginner)

How to Write a Developer Resume Summary

Your resume summary is important because it’s the first thing employers will notice. A well-crafted summary can immediately showcase your skills and set you apart from other applicants.

As a developer, it's best to highlight your technical expertise, problem-solving abilities, and relevant project experience in this section. This is your opportunity to demonstrate how you can contribute to a potential employer’s success.

To guide you in creating an effective summary, consider these developer resume summary examples that illustrate what works well and what doesn’t:

Weak summary example:

I am a skilled developer with many years of experience in various programming languages. I am seeking a challenging position where I can use my abilities to contribute meaningfully. Ideally, I want to work in an environment that promotes professional growth and learning opportunities. I believe I can be a valuable member of your team if given the chance.

- Lacks specific details about technical skills or projects, making it hard to understand the applicant’s expertise

- Overuses personal pronouns and vague phrases, which dilute the impact of the message

- Emphasizes personal desires rather than showcasing how the applicant can add value to potential employers

Strong summary example:

Results-driven developer with 7+ years of experience in full-stack development, focusing on scalable web applications and API integrations. Increased application performance by 30% through optimization techniques and improved user satisfaction ratings by 25% via intuitive UI/UX design improvements. Proficient in JavaScript, React, Node.js, and cloud services such as AWS to deliver high-quality software solutions.

- Begins with a clear indication of experience level and specific areas of expertise

- Highlights quantifiable achievements that demonstrate significant contributions to application performance and user satisfaction

- Enumerates relevant technical skills that align well with industry demands and expectations for developers

Showcasing Your Work Experience

The work experience section makes up the bulk of a developer resume, so it deserves the most attention. Good resume templates give this section prominence to help you stand out.

This section should be structured in reverse-chronological order, displaying your previous roles clearly. Use bullet points to highlight key achievements and contributions made during each position.

To illustrate what makes an effective work history section, we’ve prepared a couple of examples. These will show you best practices and common pitfalls to avoid:

Weak work history example:

Software Developer

Tech Innovations Inc. – San Francisco, CA

- Wrote code for applications.

- Attended meetings and collaborated with team members.

- Fixed bugs and improved software performance.

- Helped with documentation and testing.

- Lacks specific employment dates

- Bullet points are vague and do not highlight unique skills or results

- Emphasizes routine tasks instead of showcasing impactful contributions

Strong work history example:

Software Developer

Tech Innovations Inc. – San Francisco, CA

March 2020 - Current

- Develop and optimize software applications, improving system performance by 30% through innovative algorithm improvements.

- Lead a team of five developers in agile projects, successfully delivering features ahead of schedule with an average customer satisfaction rating of 95%.

- Mentor junior developers, fostering skill advancement and contributing to a collaborative team environment that encourages knowledge sharing.

- Starts each bullet with strong action verbs that clearly illustrate the job seeker’s contributions

- Incorporates specific metrics to highlight achievements and demonstrate impact on the organization

- Showcases relevant skills such as teamwork and mentorship that are important for a developer role

While your resume summary and work experience are key components, don't overlook the other sections. Each part plays an important role in presenting your qualifications clearly. For more detailed guidance, take a look at our comprehensive guide on how to write a resume.

Top Skills to Include on Your Resume

A well-crafted skills section is vital for an effective resume because it provides a clear snapshot of your qualifications. It lets you showcase your relevant abilities while making it easier for employers to evaluate your suitability at a glance.

Hiring managers often prioritize this section to quickly identify if job seekers meet the necessary criteria. Developers should include both technical and interpersonal skills that reflect their expertise in coding and teamwork, which will be discussed further below.

Developers rely on soft skills to foster teamwork, strengthen problem-solving, and improve communication with colleagues and clients, all of which contribute to successful project outcomes.

Selecting the right resume skills is important to align with what employers expect from their ideal applicants. Many organizations use automated screening systems that filter out applicants lacking essential skills for the role.

To ensure your resume stands out, carefully review job postings for insights on which specific skills to emphasize. This approach not only attracts recruiters' attention but also improves your chances of passing through ATS filters.

10 skills that appear on successful developer resumes

To capture the attention of recruiters, it's essential to showcase high-demand skills relevant to developer positions. These skills can make a significant difference in how your resume is perceived. For inspiration on highlighting these competencies, explore our resume examples, which will help you apply with greater confidence.

Consider incorporating the following 10 skills into your resume if they align with your experiences and job requirements:

JavaScript

Problem-solving

Version control (Git)

Agile methodology

Database management

API development

Responsive design

Collaboration tools

Debugging techniques

Front-end frameworks

Based on analysis of 5,000+ information technology (IT) professional resumes from 2023-2024

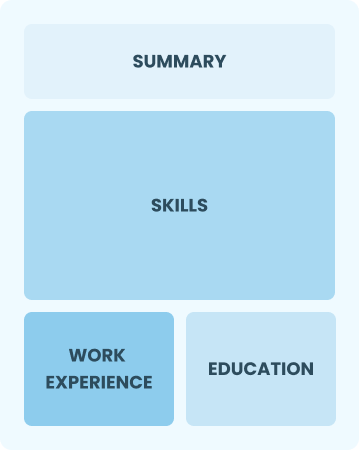

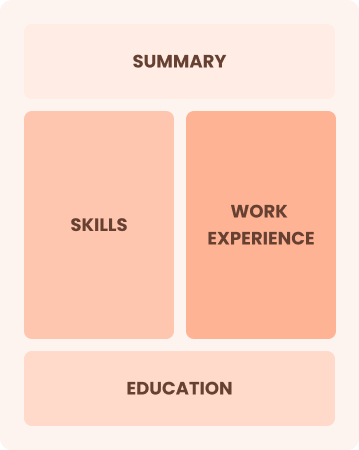

Resume Format Examples

Selecting the appropriate resume format is important for developers, as it emphasizes key technical skills, relevant projects, and career advancement, making your application more appealing to potential employers.

Functional

Focuses on skills rather than previous jobs

Best for:

Recent graduates and career changers with up to two years of experience

Combination

Balances skills and work history equally

Best for:

Mid-career developers looking to highlight their skills and growth potential

Chronological

Emphasizes work history in reverse-chronological order

Best for:

Leaders driving innovation in advanced software development projects

Developer Salaries in the Highest-Paid States

Our developer salary data is based on figures from the U.S. Bureau of Labor Statistics (BLS), the authoritative source for employment trends and wage information nationwide.

Whether you're entering the workforce or considering a move to a new city or state, this data can help you gauge what fair compensation looks like for developers in your desired area.

Frequently Asked Questions

Should I include a cover letter with my developer resume?

Absolutely. Including a cover letter can significantly improve your application by showcasing your unique qualifications and enthusiasm for the position. It allows you to elaborate on your experience and make a personal connection with hiring managers. For helpful tips, consider exploring our guide on how to write a cover letter or use our Cover Letter Generator to create one quickly.

Can I use a resume if I’m applying internationally, or do I need a CV?

When applying for jobs in many countries, a CV is often more appropriate than a resume. A CV provides a comprehensive view of your academic and professional history. Check out our CV examples to see different formats and styles that meet international standards. Additionally, learn how to write a CV with our guides and templates to craft a well-structured document.

What soft skills are important for developers?

Soft skills, including problem-solving, collaboration, and interpersonal skills, are essential for developers. These abilities improve communication with team members and clients, resulting in more effective project outcomes. By fostering a positive work environment, developers can better navigate challenges and innovate solutions together.

I’m transitioning from another field. How should I highlight my experience?

Highlight transferable skills like problem-solving, collaboration, and adaptability from tech or non-tech roles. These demonstrate your potential and readiness to excel as a developer. Use specific examples to connect these strengths to essential development tasks like debugging code or working in teams. Emphasize how your diverse background enriches your approach to creating innovative solutions.

Where can I find inspiration for writing my cover letter as a developer?

If you're applying for developer positions, exploring cover letter examples can be incredibly helpful. These samples offer insights into content ideas, formatting tips, and methods to highlight your qualifications. Use them as inspiration to create a narrative that showcases your skills and experiences confidently.

Should I use a cover letter template?

Using a cover letter template tailored for developers can improve the structure of your application by ensuring that you highlight key skills such as coding proficiency, project management experience, and problem-solving abilities. This organization helps hiring managers quickly identify your relevant qualifications.