Hard skills include expertise in data analysis, machine learning techniques, and familiarity with annotation tools that ensure accurate labeling of datasets.

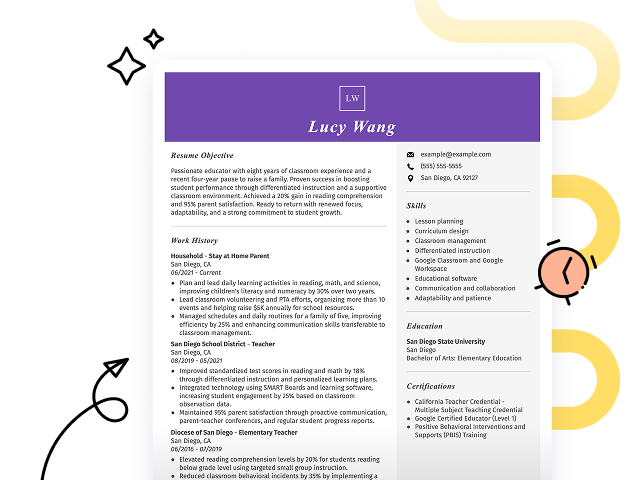

Popular Data Annotation Specialist Resume Examples

Discover our top data annotation specialist resume examples, which emphasize critical skills such as attention to detail, data quality assurance, and proficiency with annotation tools. These Resume Builder examples will help you showcase your accomplishments effectively to potential employers.

Looking to create your ideal resume? Use our Resume Builder with user-friendly templates specifically designed for professionals in data annotation, helping your application stand out.

Entry-level data annotation specialist resume

This entry-level resume effectively highlights the job seeker's ability to improve AI model precision through high-volume data annotation and process optimization. New professionals in this field need to demonstrate their skill in data handling and team collaboration to compensate for limited direct industry experience.

Mid-career data annotation specialist resume

This resume effectively showcases the job seeker’s qualifications through quantifiable achievements and a clear progression in data annotation roles. Highlighting efficiency improvements and accuracy demonstrates readiness for leadership positions and complex challenges within the industry.

Experienced data annotation specialist resume

The work history section demonstrates the applicant's strong background as a data annotation specialist, showcasing achievements like boosting annotation accuracy by 20% and streamlining workflows by 15%. The clear bullet-point format improves readability, making it easy for hiring managers to quickly identify key accomplishments.

Resume Template—Easy to Copy & Paste

Jane Brown

Cleveland, OH 44108

(555)555-5555

Jane.Brown@example.com

Professional Summary

Dynamic Data Annotation Specialist with 4 years of expertise in enhancing AI models. Proven record of boosting data precision and optimizing project efficiency through advanced labeling strategies.

Work History

Data Annotation Specialist

Insightful AI Solutions - Cleveland, OH

June 2024 - October 2025

- Annotated 10,000+ images monthly for AI models.

- Improved data accuracy by 15% through QC methods.

- Trained 3 new team members on annotation tools.

Machine Learning Labeler

Tech Innovations Co. - Northwood, OH

May 2022 - May 2024

- Tagged 8,000 text entries per month for NLP models.

- Reduced error rates by 12% in labeled datasets.

- Collaborated with team to enhance software tools.

Data Tagging Analyst

Digital Analytics Corp - Cleveland, OH

April 2021 - April 2022

- Managed 6 labeling projects to increase data ROI.

- Achieved 95% data precision via methodical checks.

- Conducted audits for consistent data tagging.

Languages

- Spanish - Beginner (A1)

- French - Beginner (A1)

- Chinese - Beginner (A1)

Skills

- Data Annotation

- Image Labeling

- Natural Language Processing

- Quality Control

- Project Management

- Collaborative Tools

- Accuracy Analysis

- Training & Development

Certifications

- Certified Data Annotation Specialist - AI Guild

- Advanced Machine Learning Labeling - Data Science Academy

Education

University of California Berkeley, California

March 2021

Bachelor of Science Computer Science

University of Washington Seattle, Washington

May 2019

How to Write a Data Annotation Specialist Resume Summary

Your resume summary is your first opportunity to capture an employer’s attention, which makes it essential for standing out in a competitive job market. As a data annotation specialist, your summary should highlight your analytical skills, attention to detail, and experience with various data types.

This role requires you to demonstrate skill in labeling and categorizing data accurately. Including relevant tools or software expertise can also strengthen your profile.

The two examples below illustrate what makes a summary effective. The first shows common pitfalls, and the second shows a successful approach:

I am an experienced data annotation specialist looking for a new opportunity. I have worked with various data sets and believe my skills can help your company succeed. I prefer a flexible work environment that values growth and development.

- Uses vague phrases like "experienced" without detailing specific skills or achievements

- Concentrates on personal preferences rather than what value the applicant brings to potential employers

- Lacks compelling language, making it sound generic and unmemorable

Detail-oriented data annotation specialist with over 4 years of experience in machine learning projects, focusing on image and text data classification. Improved annotation accuracy by 20% through rigorous quality assurance protocols and team training initiatives. Proficient in using various annotation tools like Labelbox and RectLabel, as well as collaborating with data scientists to optimize model performance.

- Begins with specific years of experience and highlights a focus area within the field

- Includes a quantifiable achievement that showcases measurable impact on project outcomes

- Mentions relevant technical skills and collaborative competencies that are essential for data annotation roles

Showcasing Your Work Experience

The work experience section is the cornerstone of your resume as a data annotation specialist and holds the bulk of its content. Good resume templates are designed to emphasize this critical section.

Organize this section in reverse-chronological order and use bullet points to highlight your key achievements in each previous role. This format lets hiring managers quickly assess your relevant skills and contributions.

To illustrate what makes a work history stand out, we’ve prepared two examples. The first shows pitfalls to avoid, and the second shows what works well.

Data Annotation Specialist

Tech Innovations Inc. – San Francisco, CA

- Annotated data for projects

- Reviewed and corrected data entries

- Collaborated with team members on tasks

- Followed guidelines to ensure accuracy

- Lacks specific details about what types of data were annotated

- Bullet points do not highlight any accomplishments or impact

- Emphasizes routine duties rather than skills or results achieved

Data Annotation Specialist

Tech Innovations LLC – San Francisco, CA

March 2020 - Present

- Analyze and label large datasets with a focus on accuracy, achieving a 98% quality score through careful attention to detail

- Collaborate with data scientists to improve machine learning models, contributing to a 30% increase in model efficiency over six months

- Train new team members on annotation best practices, fostering a culture of continuous improvement and knowledge sharing

- Uses strong action verbs that clearly demonstrate the applicant's contributions

- Incorporates specific metrics to quantify achievements, showcasing the impact of their work

- Highlights relevant skills and teamwork that align with industry standards for data annotation specialists

While your resume summary and work experience often take center stage, don't overlook the other important sections that contribute to a strong application. For additional guidance on perfecting every part of your resume, be sure to explore our comprehensive guide on how to write a resume.

Top Skills to Include on Your Resume

A well-defined technical skills section is important for any resume, as it allows you to quickly demonstrate your qualifications to potential employers. It acts as a snapshot of your capabilities, making it easier for hiring managers to see if you align with their needs.

Be sure to include a mix of hard and soft skills to make your resume stronger. Highlight technical abilities with tools like TensorFlow, Python, and annotation software such as Labelbox or Supervisely, along with analytical and attention-to-detail skills that ensure accurate and efficient data labeling.

Soft skills such as attention to detail, critical thinking, and effective communication are essential for collaborating with teams and maintaining high-quality standards in the data annotation process.

Selecting the right resume skills is important to align with employer expectations and automated screening systems. Many organizations use software to filter out applicants lacking essential qualifications for the position.

To effectively capture a recruiter's attention, review job postings closely. They provide valuable insights into which skills are prioritized, helping your resume stand out in both human and ATS evaluations.
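One rough way to act on this advice is to check which skill terms from a job posting are still missing from your resume. The sketch below is a hypothetical helper, not a description of how any particular ATS works; the function name `missing_skills`, the skill list, and the sample texts are all assumptions made for illustration.

```python
import re

def normalize(text: str) -> str:
    """Lowercase the text and replace punctuation runs with single spaces."""
    return re.sub(r"[^a-z0-9+#]+", " ", text.lower()).strip()

def missing_skills(job_posting: str, resume: str, skills: list[str]) -> list[str]:
    """Return the skills that the posting mentions but the resume does not."""
    # Pad with spaces so that only whole words or phrases match.
    posting = f" {normalize(job_posting)} "
    cv = f" {normalize(resume)} "
    missing = []
    for skill in skills:
        key = f" {normalize(skill)} "
        if key in posting and key not in cv:
            missing.append(skill)
    return missing

# Hypothetical inputs for illustration only.
SKILLS = ["Labelbox", "Supervisely", "Python", "TensorFlow", "quality assurance"]
posting = "We need experience with Labelbox, Python, and quality assurance."
resume = "Annotated 10,000+ images monthly in Labelbox; improved accuracy via QC."

print(missing_skills(posting, resume, SKILLS))
```

Exact phrase matching like this misses synonyms and abbreviations (here, "QC" does not count as "quality assurance"), which is one reason to mirror the wording of the job posting where it honestly describes your experience.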

10 skills that appear on successful data annotation specialist resumes

Highlighting essential skills on your resume can significantly improve your appeal to potential employers in the data annotation field. These sought-after skills are featured in our resume examples, which empower you to apply for jobs with a strong professional edge.

Consider incorporating these key skills into your resume if they align with your background and the job specifications:

Attention to detail

Analytical thinking

Proficiency with annotation tools

Data management

Quality assurance

Time management

Collaboration skills

Technical documentation

Problem-solving abilities

Adaptability

Based on analysis of 5,000+ data systems administration professional resumes from 2023-2024

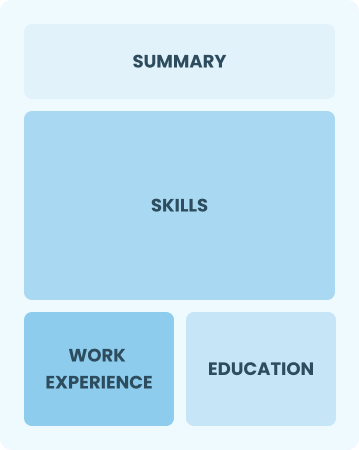

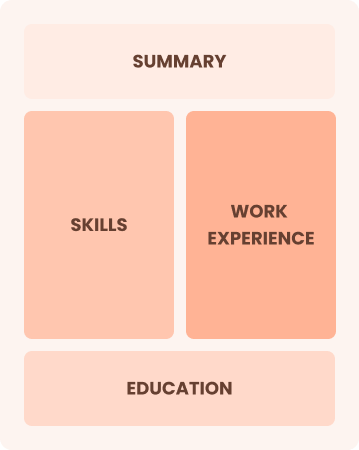

Resume Format Examples

Choosing the right resume format is important for a data annotation specialist. It allows you to clearly showcase your relevant skills and experience, making your career growth stand out to potential employers.

Functional

Focuses on skills rather than previous jobs

Best for:

Recent graduates and career changers with limited experience in data annotation

Combination

Balances skills and work history equally

Best for:

Mid-career professionals focused on demonstrating skills and seeking new opportunities

Chronological

Emphasizes work history in reverse order

Best for:

Experts in data annotation leading complex projects and teams

Frequently Asked Questions

Should I include a cover letter with my data annotation specialist resume?

Absolutely. Adding a cover letter can significantly improve your job application by showcasing your enthusiasm and highlighting relevant skills. It's an excellent way to provide additional insight into your qualifications. For guidance on crafting one, explore our step-by-step guide on how to write a cover letter or use our Cover Letter Generator for quick assistance.

Can I use a resume if I’m applying internationally, or do I need a CV?

For international job applications, use a CV instead of a resume when the employer specifically requests one or when applying in countries where CVs are the standard. To ensure your application stands out, explore resources like CV examples for guidance on formatting and creation. Additionally, learn more about how to write a CV to make your document compelling.

What soft skills are important for data annotation specialists?

Soft skills such as attention to detail, communication, and interpersonal skills are essential for data annotation specialists. These abilities help facilitate clear discussions with team members and ensure accurate data labeling, ultimately leading to more reliable machine learning models.

I’m transitioning from another field. How should I highlight my experience?

Highlight your transferable skills, such as attention to detail, analytical thinking, and effective communication. These qualities are important for a data annotation specialist and showcase your ability to excel in the role despite limited direct experience. Use specific examples from previous jobs to illustrate how you've successfully tackled challenges that align with data annotation tasks.

How do I write a resume with no experience?

If you're writing a resume with no experience as a data annotation specialist, highlight relevant projects, coursework, or personal initiatives that showcase your attention to detail and analytical skills. Emphasize your ability to learn quickly and your enthusiasm for contributing to data-driven decisions. Employers value passion and potential just as much as formal experience, so believe in your capabilities.

How do I add my resume to LinkedIn?

To improve your resume's visibility, add it to LinkedIn by uploading it directly to your profile or by highlighting key skills and experiences in the "About" and "Experience" sections. This approach helps data annotation specialists stand out, making it easier for recruiters and hiring managers to find qualified job seekers in the field.