Hard skills include expertise in automated testing, test case design, and debugging techniques that are essential for senior test engineers to ensure software quality.

Popular Senior Test Engineer Resume Examples

Discover our top senior test engineer resume examples that emphasize critical skills such as automation testing, quality assurance, and problem-solving. These examples provide effective ways to showcase your accomplishments and expertise to attract potential employers.

Ready to build a standout resume? Our Resume Builder offers user-friendly templates specifically designed for engineering professionals, helping you present your qualifications with confidence.


Entry-level senior test engineer resume

This entry-level resume highlights the applicant's technical skills and leadership abilities in software testing, showcasing achievements like significant performance enhancements and successful team management. New professionals must illustrate their practical knowledge and problem-solving capabilities through concrete examples of projects and accomplishments to attract employers, even when work experience is limited.

Mid-career senior test engineer resume

This resume effectively showcases the job seeker's extensive experience and leadership in software testing, illustrating their readiness for advanced roles. The quantifiable achievements highlight a strong track record in improving processes and team performance, signaling significant career progression and expertise.

Experienced senior test engineer resume

The work history section effectively highlights the applicant's extensive experience and significant contributions to software quality assurance. Notable achievements include improving test efficiency by 40% and leading a team that reduced the defect rate by 15% annually, with clear bullet points facilitating quick readability for hiring managers.

Resume Template: Easy to Copy & Paste

Aiko Yamamoto

Westbrook, ME 04092

(555) 555-5555

Aiko.Yamamoto@example.com

Professional Summary

Experienced Senior Test Engineer skilled in test automation and QA strategy, with a record of increasing team productivity by 25% and reducing defect rates. Holds certifications in software testing and test automation.

Work History

Senior Test Engineer

InnovateTech Solutions - Westbrook, ME

July 2024 - March 2026

- Automated 90% of test cases, saving 20 hours weekly

- Improved defect resolution by 30% in 6 months

- Led QA team that reduced the bug rate by 15%

Test Lead

DataQuest Labs - Portland, ME

May 2023 - June 2024

- Decreased test cycle time by 25%

- Managed test environments for 5 projects

- Enhanced test coverage by integrating tools

Quality Assurance Engineer

ByteTrack Systems - Westbrook, ME

March 2022 - April 2023

- Established QA frameworks, cutting bugs by 40%

- Optimized testing speed, saving 10 hours weekly

- Initiated defect triage sessions reducing backlog

Languages

- Spanish - Beginner (A1)

- French - Beginner (A1)

- German - Beginner (A1)

Skills

- Test Automation

- Defect Management

- QA Strategy

- Performance Testing

- Selenium

- Agile Methodologies

- Test Planning

- Regression Testing

Certifications

- Certified Software Test Engineer (CSTE) - QAI Global Institute

- ISTQB Advanced Test Automation Engineer - International Software Testing Qualifications Board

Education

Master of Science in Computer Science

Stanford University - Stanford, California

June 2021

Bachelor of Science in Software Engineering

University of California, Berkeley - Berkeley, California

June 2019

How to Write a Senior Test Engineer Resume Summary

Your resume summary is the first impression employers have of you, so it must convey your qualifications effectively. As a senior test engineer, you should highlight your technical skills, problem-solving abilities, and experience with various testing methodologies. To help you craft a compelling summary, we provide examples that illustrate what works well and what might not resonate:

I am an experienced senior test engineer with a solid background in software testing. I hope to find a job that allows me to use my skills and grow professionally. A collaborative environment where I can make strong contributions is what I'm seeking. I believe I would be a valuable addition to your team if given the chance.

- Contains vague phrases about skills and lacks specific details regarding expertise or achievements

- Relies heavily on first-person language, making it weaker and more generic

- Emphasizes the applicant's desires instead of showcasing how they can benefit the employer

Results-driven senior test engineer with 8+ years of experience in software testing and quality assurance. Achieved a 30% reduction in software defects through the implementation of automated testing frameworks, improving overall product quality. Proficient in various testing methodologies including Agile and Waterfall, as well as tools such as Selenium and JIRA to streamline processes and ensure compliance with industry standards.

- Begins with specific experience level and focuses on key engineering expertise

- Includes quantifiable achievement that highlights significant contributions to product quality

- Mentions relevant technical skills that demonstrate capability in modern testing environments


Showcasing Your Work Experience

The work experience section is the centerpiece of your resume as a senior test engineer, where you’ll provide the most substantial content. Good resume templates are designed to prioritize this section, helping you stand out.

This part should be organized in reverse-chronological order, detailing your previous positions. Use bullet points to highlight your achievements and contributions in each role you've held.

To guide you further, we’ve compiled a couple of examples that demonstrate effective work history entries for senior test engineers. These examples will clarify what makes an entry strong and help you avoid common pitfalls.

Senior Test Engineer

Tech Innovations Inc. – San Francisco, CA

- Performed testing on software products.

- Documented test results and findings.

- Collaborated with the development team.

- Assisted in troubleshooting issues.

- Lacks specific employment dates to show tenure

- Bullet points are overly simplistic and do not highlight achievements

- Emphasizes routine tasks rather than measurable impacts or contributions

Senior Test Engineer

Tech Solutions Inc. – San Francisco, CA

March 2020 - Present

- Develop and execute comprehensive test plans for software applications, improving defect detection rates by 30%.

- Lead a team to implement automated testing frameworks, reducing testing time by 40% while increasing overall product quality.

- Mentor junior engineers in best practices for testing methodologies, fostering a culture of continuous improvement and skill development.

- Starts with strong action verbs that clearly articulate the job seeker’s contributions

- Incorporates quantifiable achievements to illustrate effectiveness and results

- Highlights essential skills relevant to the role while demonstrating leadership and mentorship capabilities

While your resume summary and work experience are critical elements, don’t overlook other sections that also need careful attention. Each part of your resume contributes to the overall impression you make. For detailed guidance, be sure to check out our comprehensive guide on how to write a resume.

Top Skills to Include on Your Resume

Including a skills section on your resume is vital as it provides a clear snapshot of your qualifications. This section not only helps job seekers highlight their strengths but also saves time for employers who are evaluating numerous applications.

For hiring managers, the skills section is an efficient way to gauge whether applicants meet specific criteria and align with the role's demands. Senior test engineers should emphasize both technical proficiencies and interpersonal skills.

Soft skills such as communication, teamwork, and problem-solving are essential for a senior test engineer because they help projects succeed and foster a positive work environment.

When selecting skills for your resume, align them with what employers expect from their ideal applicants. Many organizations use applicant tracking systems (ATS) to filter out applicants who lack the required skills, so matching these expectations is important.

To ensure you highlight the right abilities, review job postings carefully. This will help you identify which skills recruiters seek most and which keywords an ATS is likely to scan for during the application process.


10 skills that appear on successful senior test engineer resumes

Highlighting key skills on your resume can significantly improve your appeal to recruiters seeking senior test engineers. Our resume examples illustrate how these sought-after skills are showcased, empowering you to apply for positions with greater confidence.

Here are 10 essential skills you should consider adding to your resume if they align with your experience and the job's requirements:

Test automation

Scripting languages

Analytical thinking

Attention to detail

Agile methodologies

Defect tracking systems

Collaboration tools

Performance testing

Continuous integration/continuous deployment (CI/CD)

API testing

Based on analysis of 5,000+ computer software professional resumes from 2023-2024

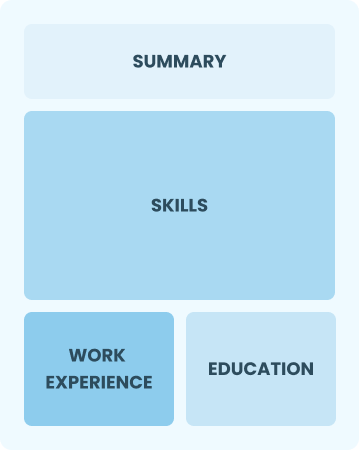

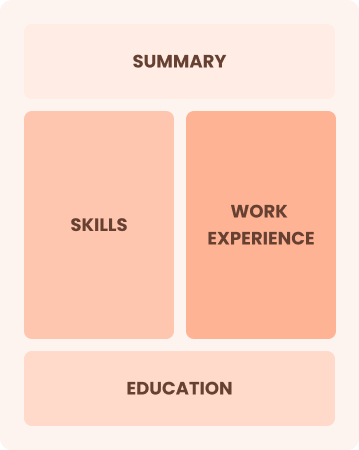

Resume Format Examples

Selecting the right resume format is important for a senior test engineer, as it emphasizes your technical expertise, project experience, and career advancements in a clear and organized manner.

Functional

Focuses on skills rather than previous jobs

Best for:

Recent graduates and career changers with limited experience in testing

Combination

Balances skills and work history equally

Best for:

Mid-career professionals focused on demonstrating their skills and pursuing growth opportunities

Chronological

Emphasizes work history in reverse-chronological order

Best for:

Seasoned engineers with a track record in complex testing frameworks and team leadership

Frequently Asked Questions

Should I include a cover letter with my senior test engineer resume?

Absolutely. A cover letter can significantly strengthen your application by showcasing your personality and clarifying how your skills align with the job. It’s also a great opportunity to express your enthusiasm for the position. If you need assistance, check out our easy-to-follow guide on how to write a cover letter or use our Cover Letter Generator for quick results.

Can I use a resume if I’m applying internationally, or do I need a CV?

When applying for jobs outside the U.S., you may need a CV instead of a resume, since employers in many countries expect that format. To assist job seekers, we offer comprehensive resources on how to write a CV and provide CV examples to help your application meet international standards.

What soft skills are important for senior test engineers?

Soft skills like problem-solving, adaptability, and collaboration are important for senior test engineers. These abilities support effective communication with team members and stakeholders, keep testing processes running smoothly, and encourage a culture of continuous improvement and innovation in software quality.

I’m transitioning from another field. How should I highlight my experience?

Highlight your transferable skills like analytical thinking, teamwork, and attention to detail from previous roles. These abilities illustrate your potential value as a senior test engineer, even if you lack direct experience. Use concrete examples to connect your past successes with key testing responsibilities to show how you can excel in this new role.

How do I write a resume with no experience?

A resume with little or no direct experience can still stand out. Highlight your academic projects, internships, and relevant coursework. Emphasize skills like attention to detail and problem-solving. Employers appreciate enthusiasm and potential, so showcase your passion for quality assurance to make a great impression.

Should I include a personal mission statement on my senior test engineer resume?

Yes, including a personal mission statement on your resume is recommended. It conveys your core values and career aspirations. This approach works particularly well for organizations that prioritize culture and mission alignment, allowing you to stand out in a competitive job market.