For a test lead, hard skills include expertise in software testing methodologies, skill with testing tools, and the ability to write comprehensive test plans.

Popular Test Lead Resume Examples

Check out our top test lead resume examples that showcase key skills like test strategy development, team leadership, and quality assurance. These examples will help you effectively present your accomplishments to potential employers.

Looking to build your ideal resume? Our Resume Builder offers user-friendly templates designed specifically for professionals in the testing field to help you shine.

Test lead resume

The resume showcases a streamlined layout and sophisticated resume fonts that significantly improve readability. These design elements convey professionalism, allowing this early-career test lead to present their qualifications clearly and make a compelling impact on potential employers.

QA lead resume

This resume effectively integrates key skills such as automated testing and team leadership with extensive work experience. By showcasing these abilities alongside specific accomplishments, employers can clearly assess the job seeker's strengths in improving software quality and leading effective QA teams.

Test manager resume

This resume uses bullet points effectively to showcase the job seeker's extensive experience, making it easy for hiring managers to identify key achievements at a glance. The clear headings and strategic spacing improve readability, ensuring that important contributions stand out amidst a wealth of information.

Resume Template—Easy to Copy & Paste

David Park

Austin, TX 78702

(555)555-5555

David.Park@example.com

Professional Summary

Dynamic Test Lead with expertise in QA, process improvement, and team leadership, proven by increased efficiency and reduced defects, ready to drive tech innovations.

Work History

Test Lead

InnoTech Solutions - Austin, TX

January 2023 - December 2025

- Led a team of 8 testers, improving output by 30%

- Implemented new QA processes, reducing defects by 25%

- Collaborated with dev team for seamless integration

QA Manager

TechWave Innovations - Dallas, TX

January 2021 - December 2022

- Managed testing for software projects, increasing efficiency

- Analyzed test data, improving validation by 20%

- Trained staff on agile methodologies for faster delivery

Test Engineer

CodeCraft Labs - Houston, TX

January 2020 - December 2020

- Executed test scripts, reducing bugs by 15%

- Developed automated tests, cutting time by 40%

- Maintained testing environments for QA reliability

Skills

- Quality Assurance

- Test Automation

- Agile Methodologies

- Team Leadership

- Defect Tracking

- Test Case Design

- Performance Testing

- Continuous Integration

Certifications

- Certified Software Tester (CSTE) - QAI Global Institute

- ISTQB Advanced Level Test Manager - ISTQB

Education

Master's Computer Science

Stanford University - Stanford, California

June 2019

Bachelor's Information Technology

University of California, Berkeley - Berkeley, California

June 2018

Languages

- Spanish - Beginner (A1)

- French - Intermediate (B1)

- German - Beginner (A1)

How to Write a Test Lead Resume Summary

Your resume summary is the first impression hiring managers have of you, making it important to capture their attention. This section should clearly convey your qualifications and what sets you apart in the competitive landscape of test leads.

As a test lead, you need to showcase your leadership abilities, technical expertise, and problem-solving skills. Highlighting your experience with project management and quality assurance will demonstrate your value to potential employers.

To illustrate effective approaches to crafting this essential section, let's explore some summary examples tailored for test leads. These examples will clarify what resonates well and what doesn't:

I am a seasoned test lead with extensive experience in software testing and project management. I hope to find a position where I can use my skills effectively and contribute positively to the team. A role that offers opportunities for professional development and learning is what I seek.

- Contains vague language about experience without providing specific achievements or metrics

- Focuses primarily on personal aspirations rather than highlighting how the job seeker adds value to potential employers

- Lacks strong, compelling verbs and relies on generic terms that fail to convey expertise or unique qualities

Results-driven test lead with over 7 years of experience in software testing and quality assurance. Successfully led a team that reduced defect rates by 30% through the implementation of automated testing frameworks and rigorous QA processes. Proficient in various testing tools such as Selenium, JIRA, and TestRail, with a strong background in Agile methodologies and continuous integration practices.

- Begins with clear experience level and specific role focus

- Highlights quantifiable achievements that showcase the applicant's impact on project quality

- Mentions relevant technical skills that align with industry standards and employer expectations

Pro Tip

There are plenty of tailored resume objective examples available that can guide you in showcasing your potential in your desired field.

Showcasing Your Work Experience

As a test lead, the work experience section is where you'll present the bulk of your resume's content. Good resume templates give this section prominent space.

This section should be organized in reverse-chronological order, detailing your previous roles. Use bullet points to showcase key achievements and contributions made in each position.

To further assist you, we’ve put together a couple of examples that highlight effective work history entries for test leads. These examples will clarify what stands out and what to avoid:

Test Lead

Tech Solutions Inc. – New York, NY

- Led testing efforts for software products.

- Created test plans and executed tests.

- Collaborated with developers to fix issues.

- Reviewed test results and reported findings.

- Lacks specific details about the types of software tested

- Bullet points do not highlight any measurable success or impact

- Focuses on routine tasks rather than showcasing leadership or problem-solving abilities

Test Lead

Tech Innovations Inc. – San Francisco, CA

March 2020 - Present

- Lead a team of 10 QA testers to design and execute comprehensive test plans for software releases, ensuring high-quality deliverables.

- Implemented automation testing processes that reduced testing time by 30%, increasing overall team efficiency.

- Facilitated cross-departmental workshops to improve communication between development and testing teams, resulting in a 40% decrease in defect rates.

- Uses action verbs that highlight leadership and impact on team performance

- Incorporates quantifiable metrics to clearly demonstrate improvements and successes achieved

- Showcases relevant skills in both technical and interpersonal areas essential for the role

While the resume summary and work experience are important components of your resume, don't overlook the significance of other sections that can improve your application. For further insights and detailed guidance, visit our comprehensive guide on how to write a resume.

Top Skills to Include on Your Resume

A skills section is important for any resume because it quickly communicates your key competencies to potential employers. It highlights your qualifications and ensures you stand out in the application process.

Every resume should have a mix of hard skills and soft skills. For a test lead, hard skills cover software testing methodologies, testing tools, and test planning. Including a diverse range of relevant skills will make your application much stronger.

Equally important are soft skills, such as leadership, communication, and problem-solving, which foster team collaboration and ensure project success.

Selecting the right resume skills is important for aligning with what employers expect from applicants. Many organizations use automated systems to filter out applicants lacking essential skills needed for the position.

To improve your chances of getting noticed, take time to examine job postings closely. They often highlight key skills that recruiters and ATS systems prioritize, guiding you on what to feature prominently in your resume.

10 skills that appear on successful test lead resumes

Highlighting in-demand skills on your resume is essential for attracting the attention of recruiters in test lead positions. You can find resume examples that illustrate how these skills improve resumes, helping you to present yourself as a qualified job seeker with confidence.

If any of the skills listed below match your experience and the job requirements, consider adding them to your resume:

- Test planning

- Automation testing

- Communication

- Problem-solving

- Attention to detail

- Agile methodology

- Team leadership

- Documentation skills

- Performance testing

- Defect tracking

Based on analysis of 5,000+ statistics professional resumes from 2023-2024

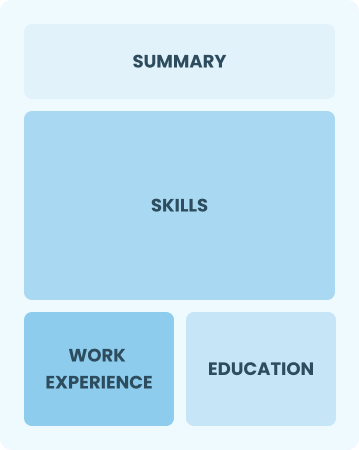

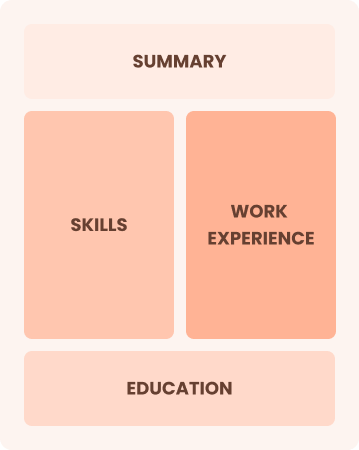

Resume Format Examples

Selecting the right resume format is important as it showcases your most relevant skills, experience, and career growth, ensuring that potential employers quickly recognize your qualifications and achievements.

Functional

Focuses on skills rather than previous jobs

Best for: Recent graduates and career changers with limited experience

Combination

Balances skills and work history equally

Best for: Mid-career professionals focused on demonstrating their skills and potential

Chronological

Emphasizes work history in reverse order

Best for: Experienced leaders excelling in strategic project management

Frequently Asked Questions

Should I include a cover letter with my test lead resume?

Absolutely. Including a cover letter can greatly improve your application by showcasing your personality and explaining why you're a perfect fit for the job. It gives you a chance to highlight specific experiences that align with the role.

If you need assistance, explore our guide on how to write a cover letter or use our Cover Letter Generator for quick support.

Can I use a resume if I’m applying internationally, or do I need a CV?

When applying for jobs abroad, you will often need a CV instead of a resume. A CV provides a comprehensive overview of your academic and professional background, and employers in many countries prefer it. Explore our resources to learn how to write a CV effectively and ensure your application stands out.

Additionally, you can find various CV examples to guide you through the formatting and creation process.

What soft skills are important for test leads?

Soft skills such as communication, leadership, and problem-solving are essential for test leads. These interpersonal skills promote teamwork, improve project management, and ensure challenges are handled efficiently, ultimately driving successful project outcomes.

I’m transitioning from another field. How should I highlight my experience?

Highlight your transferable skills such as communication, teamwork, and analytical thinking from previous roles. These abilities illustrate your potential to excel in a test lead position despite lacking direct experience.

Share concrete examples that connect your past successes to the key responsibilities of this role, showcasing how you can add value to the team.

How should I format a cover letter for a test lead job?

Begin your cover letter with your contact details and a professional greeting. Follow this with an engaging introduction that highlights your interest in the test lead position.

Include a summary of your relevant skills and experiences, ensuring you align these with the job requirements. Conclude with a strong closing statement that encourages further communication.

How do I write a resume with no experience?

If you're applying for a test lead position, highlight your relevant coursework, personal projects, and any internships. Showcase your problem-solving abilities, leadership skills, and attention to detail.

When writing a resume with no experience, emphasize your enthusiasm for quality assurance and teamwork. Employers value potential, so focus on how you can contribute positively despite limited formal roles.