Hard skills are technical, measurable abilities, such as proficiency in AWS services, data modeling, ETL processes, and SQL programming, that are essential for effective data engineering.

Popular AWS Data Engineer Resume Examples

Check out our top AWS data engineer resume examples that emphasize critical skills such as data modeling, cloud architecture, and ETL processes. These samples will guide you in showcasing your accomplishments effectively to potential employers.

Ready to build an impressive resume? Our Resume Builder offers user-friendly templates specifically designed for tech professionals, helping you shine in the job market.

Entry-level AWS data engineer resume

This entry-level resume for an AWS Data Engineer effectively highlights the job seeker's technical skills and relevant project experiences, showcasing their ability to optimize data pipelines and implement efficient cloud solutions. New professionals in this field must demonstrate strong problem-solving abilities and familiarity with data engineering tools, even if their direct work experience is limited.

Mid-career AWS data engineer resume

This resume effectively outlines essential qualifications, showcasing the applicant's extensive experience in AWS and data engineering. The clear presentation of accomplishments and skills illustrates readiness for advanced roles, emphasizing a proven track record of optimizing data systems and driving efficiency.

Experienced AWS data engineer resume

The work history section illustrates the applicant's extensive experience as an AWS Data Engineer, demonstrating their ability to optimize cloud costs by 30% and improve data pipelines by 40%. The clear bullet point format allows hiring managers to quickly grasp key achievements and technical skills.

Resume Template—Easy to Copy & Paste

Sophia Martinez

Northwood, OH 43623

(555)555-5555

Sophia.Martinez@example.com

Professional Summary

AWS Data Engineer with 6 years' experience optimizing cloud solutions. Expert in ETL, cost management, and process automation. Proven track record of enhancing performance by 30%.

Work History

AWS Data Engineer

CloudNova Solutions - Northwood, OH

September 2023 - October 2025

- Optimized AWS infrastructure, cutting costs by 20%

- Developed ETL scripts boosting data processing by 30%

- Managed cloud data solutions for 10+ clients

Cloud Data Analyst

TechVision Analytics - Cleveland, OH

February 2021 - August 2023

- Led project team to deliver insights, increasing ROI by 15%

- Implemented data lakes, enhancing query speed by 25%

- Coordinated with departments improving workflow by 40%

Junior Cloud Architect

DataSphere Innovations - Northwood, OH

January 2019 - January 2021

- Designed scalable AWS solutions for 5 major projects

- Reduced downtime by 35% through system optimization

- Enhanced security protocols decreasing incidents by 50%

Skills

- AWS Cloud Solutions

- Data Analysis

- ETL Development

- Database Management

- Infrastructure Optimization

- Security Protocols

- Cost Management

- Process Automation

Education

Master's Degree in Data Science

University of Washington - Seattle, WA

June 2018

Bachelor's Degree in Computer Science

State University - New York, NY

June 2016

Certifications

- AWS Certified Solutions Architect - Amazon Web Services

- Certified Data Engineering Professional - Data Institute

Languages

- Spanish - Beginner (A1)

- French - Beginner (A1)

- Mandarin - Beginner (A1)

How to Write an AWS Data Engineer Resume Summary

Your resume summary is important because it's the first thing employers will read. It sets the tone for your application and can determine whether you stand out or blend in.

As an AWS Data Engineer, emphasize your expertise in cloud solutions, data management, and system optimization. These skills are vital for showcasing your ability to handle complex data infrastructures.

To better understand effective summaries, compare these two AWS data engineer resume summaries. The first misses the mark, and the second captures attention:

I am an AWS Data Engineer with extensive experience in data management and cloud technologies. I am seeking a position where I can use my skills to help the company grow and succeed. A job that offers flexibility and advancement opportunities is what I desire. I believe my background will be beneficial to your team.

- Lacks specific details about technical skills or achievements related to AWS or data engineering

- Emphasizes personal desires over contributions to potential employers, which can weaken impact

- Uses generic phrases like 'extensive experience' without providing concrete examples or metrics

Results-driven AWS Data Engineer with over 6 years of experience in designing and implementing scalable data solutions on cloud platforms. Achieved a 30% increase in data processing efficiency by optimizing ETL pipelines and leveraging AWS services such as Lambda and Redshift. Proficient in Python, SQL, and data visualization tools to deliver actionable insights that drive business decisions.

- Starts with a clear indication of experience level and area of expertise in AWS

- Highlights a quantifiable achievement that shows the impact on operational efficiency

- Mentions specific technical skills relevant to data engineering roles, which strengthens the applicant's fit for the job

Showcasing Your Work Experience

The work experience section is central to an AWS data engineer resume and will contain most of your content. Good resume templates always emphasize this section.

This part should be organized in reverse-chronological order, listing your previous roles. Use bullet points to succinctly describe your achievements and contributions in each position.

To illustrate effective practices, here are two examples. The first shows what to avoid, and the second shows what works well:

AWS Data Engineer

Tech Innovations Inc. – Seattle, WA

- Managed cloud resources

- Worked on data pipelines

- Collaborated with team members

- Performed routine maintenance tasks

- Lacks specifics about the projects or technologies used

- Bullet points are too generic and do not highlight any significant achievements

- Does not provide insights into the impact of the applicant's contributions

AWS Data Engineer

Tech Solutions Inc. – San Francisco, CA

March 2020 - Present

- Design and implement scalable data pipelines using AWS services, improving data processing speed by 30%

- Optimize existing ETL processes to reduce costs by 20% while maintaining data integrity

- Collaborate with data scientists to develop machine learning models that increased predictive accuracy by 15%

- Starts each bullet point with strong action verbs that highlight the applicant’s contributions

- Incorporates specific metrics to showcase tangible outcomes of the projects undertaken

- Demonstrates relevant skills in cloud computing and collaboration that are critical for the role

While your resume summary and work experience are important, don't overlook the other sections of your resume. For detailed guidance on perfecting every part, be sure to check out our comprehensive guide on how to write a resume.

Top Skills to Include on Your Resume

A skills section is important for any resume because it allows you to quickly demonstrate your qualifications to potential employers. It provides a snapshot of your capabilities, ensuring that hiring managers see your most relevant strengths at a glance.

Be sure to include a mix of hard and soft skills to make your resume stronger. Highlight technical abilities like AWS, SQL, Python, and data warehousing tools such as Redshift or Snowflake.

Equally important are soft skills, such as analytical thinking, problem-solving, teamwork, and effective communication. These skills play an important role in collaborating with cross-functional teams and ensuring project success in a dynamic environment.

When selecting skills for your resume, it's essential to align with what employers expect from job seekers. Many organizations use applicant tracking systems (ATS) that filter out applicants whose resumes lack the required skills.

To effectively tailor your application, review job postings closely for insight into the specific skills sought by recruiters. This will help you highlight relevant abilities that can improve your chances of passing through both human and ATS evaluations.

10 skills that appear on successful AWS data engineer resumes

Improve your resume to attract recruiters by showcasing key skills that are in high demand for AWS data engineer positions. Our resume examples illustrate these essential skills, giving you the confidence to apply effectively.

Here are 10 skills you should consider including in your resume if they align with your experience and job specifications:

Data modeling

ETL processes

AWS services (S3, Redshift)

SQL proficiency

Python programming

Data warehousing concepts

Big data technologies (Hadoop, Spark)

Cloud architecture design

Problem-solving abilities

Collaboration and teamwork

Based on analysis of 5,000+ data systems administration professional resumes from 2023-2024

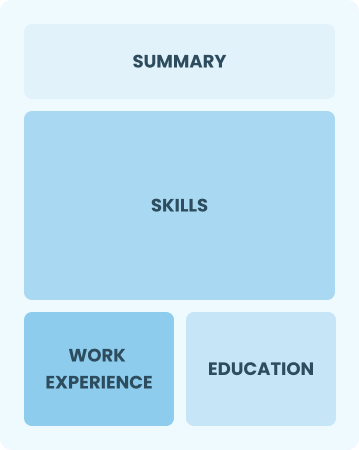

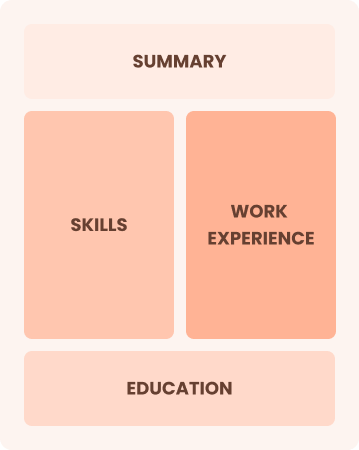

Resume Format Examples

Choosing the right resume format is important for an AWS data engineer because the right format showcases your technical skills, relevant experience, and growth in this rapidly evolving field.

Functional

Focuses on skills rather than previous jobs

Best for:

Recent graduates and career changers with up to two years of experience

Combination

Balances skills and work history equally

Best for:

Mid-career professionals eager to demonstrate their skills and pursue new opportunities

Chronological

Emphasizes work history in reverse order

Best for:

Seasoned experts leading innovative data solutions and architectures

Frequently Asked Questions

Should I include a cover letter with my AWS data engineer resume?

Absolutely. Including a cover letter can significantly improve your application. It allows you to showcase your personality and clarify how your skills align with the job. If you're looking for guidance on crafting an effective cover letter, consider checking out our comprehensive guide on how to write a cover letter or use our Cover Letter Generator for quick assistance.

Can I use a resume if I’m applying internationally, or do I need a CV?

When applying for jobs outside the U.S., use a CV instead of a resume. A CV provides a comprehensive overview of your academic and professional history, which employers in many countries require. For guidance on proper CV formatting, explore our resources on how to write a CV and review various CV examples tailored for international applications.

What soft skills are important for AWS data engineers?

Interpersonal skills, communication, problem-solving, and collaboration are essential for AWS data engineers. These abilities aid in effectively conveying complex technical information to team members and stakeholders, ensuring smoother project execution and fostering a productive work environment.

I’m transitioning from another field. How should I highlight my experience?

Highlight your transferable skills such as analytical thinking, teamwork, and project management when applying for AWS Data Engineer roles. Demonstrating these abilities showcases your potential to adapt and add value even if you have limited direct experience. Share specific examples from past positions where you effectively applied these skills to solve challenges or improve processes in data management.

Where can I find inspiration for writing my cover letter as an AWS data engineer?

If you're pursuing a role as an AWS data engineer, consider reviewing professionally crafted cover letter examples. These samples provide valuable insights into content ideas, formatting tips, and effective ways to showcase your qualifications, making your application stand out in a competitive job market.

How do I add my resume to LinkedIn?

To increase your resume's visibility, add it directly to your LinkedIn profile and highlight key projects in the "About" and "Experience" sections. This approach helps data engineering recruiters find you and understand your expertise in AWS technologies.