Technical abilities such as data modeling, ETL (Extract, Transform, Load) processes, and proficiency with Azure services like Data Factory and Databricks are considered hard skills.

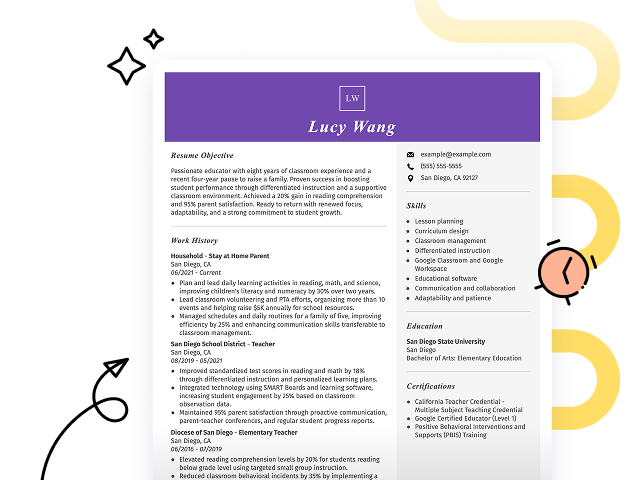

Popular Azure Data Engineer Resume Examples

Check out our top Azure data engineer resume examples that emphasize key skills such as data integration, cloud solutions, and analytics expertise. These examples illustrate how to effectively showcase your accomplishments in this dynamic field.

Ready to build your ideal resume? Our Resume Builder offers user-friendly templates specifically designed for tech professionals, helping you shine in your job applications.

Entry-level Azure data engineer resume

This entry-level Azure data engineer resume showcases the applicant's technical skills and accomplishments in cloud optimization, data analysis, and ETL processes. New professionals in this field should lean on their education and relevant projects to demonstrate problem-solving ability and technical proficiency despite limited professional experience.

Mid-career Azure data engineer resume

This resume effectively outlines qualifications by showcasing compelling achievements and technical skills relevant to cloud data engineering. The clear progression from analyst to engineer highlights the job seeker's readiness for advanced challenges and leadership roles in data solutions.

Experienced Azure data engineer resume

The work history section illustrates the applicant's expertise as an Azure data engineer, showcasing significant achievements like optimizing data workflows by 30% and improving data accuracy by 20%. The clear bullet-point format improves readability, making it effective for technical hiring managers seeking precise accomplishments.

Resume Template—Easy to Copy & Paste

Jane Kim

Hillcrest, NY 11510

(555)555-5555

Jane.Kim@example.com

Skills

- Azure Data Services

- SQL & NoSQL Databases

- ETL Processes

- Data Warehousing

- Cloud Architecture

- Big Data Technologies

- Power BI

- Python & R Programming

Languages

- Spanish - Beginner (A1)

- French - Intermediate (B1)

- Chinese - Beginner (A1)

Professional Summary

Accomplished Azure Data Engineer adept in cloud solutions and ETL processes. Proven track record of optimizing data pipelines and enhancing performance. Expert in Azure, Power BI, and Python with a focus on efficiency and cost-saving innovations.

Work History

Azure Data Engineer

TechCloud Innovations - Hillcrest, NY

March 2024 - October 2025

- Built data pipelines improving efficiency by 30%

- Implemented cloud solutions saving $200,000 annually

- Managed cloud migrations enhancing performance by 20%

Cloud Data Specialist

DataCrafters Solutions - Albany, NY

January 2023 - February 2024

- Optimized database reducing query times by 40%

- Designed ETL processes increasing data accuracy by 15%

- Conducted data analytics supporting M in decisions

Data Analyst

Insight Analytics Co. - Hillcrest, NY

February 2021 - December 2022

- Developed reports boosting sales by 25%

- Analyzed datasets increasing insights extraction by 10%

- Streamlined processes saving over 500 work hours

Certifications

- Microsoft Certified: Azure Data Engineer Associate - Microsoft

- Certified Data Management Professional (CDMP) - DAMA International

Education

Master of Science, Data Science

University of Washington - Seattle, WA

June 2021

Bachelor of Science, Computer Science

California State University - Los Angeles, CA

June 2019

How to Write an Azure Data Engineer Resume Summary

Your resume summary is the first impression you make on hiring managers, so it’s essential to craft it thoughtfully. As an Azure data engineer, you should highlight your technical skills, experience with data architecture, and proficiency with cloud services.

This profession requires a mix of analytical abilities and hands-on expertise in managing data workflows. Focus on showcasing your successes in optimizing data processes and implementing solutions that drive business insights.

To illustrate what makes a compelling summary, the two examples below show a weak summary first and a strong one second, each followed by notes on why it falls short or works:

I am an experienced Azure Data Engineer with a broad range of skills and knowledge. I hope to find a position that allows me to use my abilities and be part of a successful team. A company that values innovation and offers room for personal development would be perfect for me. I believe I can contribute positively if given the chance.

- Contains vague descriptions of skills without mentioning specific technologies or achievements

- Relies heavily on personal desires, lacking focus on what the applicant can provide to the employer

- Uses generic phrases that fail to highlight unique strengths or experiences relevant to data engineering

Results-driven Azure Data Engineer with over 6 years of experience in designing and implementing data solutions on Microsoft Azure. Successfully optimized ETL processes, resulting in a 30% reduction in data processing time while increasing data accuracy by 25%. Proficient in Azure Data Factory, SQL Server, and Power BI for creating robust data pipelines and insightful dashboards.

- Begins with a clear indicator of years of experience and specific role expertise

- Highlights quantifiable achievements that reflect significant improvements in efficiency and accuracy

- Mentions relevant technical skills essential for the position, demonstrating immediate value to potential employers

Showcasing Your Work Experience

The work experience section is the cornerstone of your resume as an Azure data engineer. This area typically contains the bulk of your content, and effective resume templates prominently feature this section.

This part should be structured in reverse-chronological order, listing your previous positions. Use bullet points to succinctly showcase your achievements and the impact you made in each role.

Now, let's look at two examples that illustrate how to present your work history as an Azure data engineer. The first shows common pitfalls to avoid; the second shows what effectively captures attention:

Azure Data Engineer

Tech Solutions Inc. – Seattle, WA

- Managed databases and data pipelines

- Collaborated with team members on projects

- Monitored system performance and made adjustments

- Assisted in troubleshooting issues as they arose

- No specifics on the technologies or tools used

- Bullet points lack details about individual contributions or successes

- Emphasis on routine tasks instead of compelling achievements

Azure Data Engineer

Tech Solutions Inc. – Austin, TX

March 2020 - Present

- Design and implement scalable data pipelines using Azure Data Factory, resulting in a 30% reduction in data processing time

- Collaborate with cross-functional teams to optimize data architecture, improving data accessibility for analytics by 40%

- Lead training sessions for junior engineers on best practices in Azure technologies, improving team competency and productivity

- Uses powerful action verbs to clearly communicate achievements and responsibilities

- Incorporates quantifiable outcomes that demonstrate the job seeker's impact on the organization

- Highlights relevant technical skills essential for an Azure Data Engineer role
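If you're unsure how to arrive at a figure like "a 30% reduction in data processing time," the arithmetic is a simple before-and-after comparison. The sketch below is one way to compute it in Python; the function name and the sample run times are hypothetical, so substitute measurements from your own projects.

```python
def percent_change(before: float, after: float) -> float:
    """Percent change from `before` to `after`; a negative result is a reduction."""
    if before == 0:
        raise ValueError("baseline must be non-zero")
    return (after - before) * 100 / before


# Hypothetical numbers: a nightly pipeline that took 50 minutes now takes 35.
change = percent_change(50, 35)
print(f"{abs(change):.0f}% reduction in processing time")
```

Only quote a percentage you can back up with real before-and-after measurements if an interviewer asks.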

While your resume summary and work experience are important, don’t overlook the other sections that contribute to a strong application. For detailed guidance on how to present all parts of your resume effectively, refer to our comprehensive guide on how to write a resume.

Top Skills to Include on Your Resume

A skills section is essential for your resume as it showcases your qualifications at a glance. This helps potential employers quickly assess if you’re the right fit for the Azure Data Engineer role.

Be sure to include a mix of hard and soft skills to make your resume stronger. Highlight technical abilities with Azure SQL Database, Azure Data Factory, and data modeling techniques, along with analytical and communication skills that help you manage data processes and collaborate effectively across teams.

Interpersonal qualities like problem-solving, teamwork, and effective communication are soft skills that foster collaboration among team members and ensure successful project outcomes.

Selecting the right resume skills helps you align with what employers expect from applicants. Many organizations use applicant tracking systems (ATS) to filter out job seekers who lack essential qualifications, so your resume needs to name the relevant skills explicitly.

To improve your chances of being noticed, carefully review job postings for insights into the specific skills that recruiters seek. Highlighting these prioritized abilities can help ensure your resume resonates well with both hiring managers and ATS software.
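Since you likely write Python already, one way to do this review is a quick self-check script. The sketch below is only an illustration: the function name, sample texts, and skill list are hypothetical, and it does not reproduce how any particular ATS scores resumes. It simply lists skills that a posting mentions but your resume does not.

```python
import re


def missing_skills(job_posting: str, resume: str, skills: list[str]) -> list[str]:
    """Return the skills that the posting mentions but the resume does not."""

    def mentions(text: str, skill: str) -> bool:
        # Case-insensitive match that does not fire inside a longer word,
        # so "SQL" is not counted as present just because "NoSQL" appears.
        pattern = r"(?<!\w)" + re.escape(skill) + r"(?!\w)"
        return re.search(pattern, text, flags=re.IGNORECASE) is not None

    return [s for s in skills if mentions(job_posting, s) and not mentions(resume, s)]


# Hypothetical sample texts; paste in the real posting and your own resume.
posting = "Seeking an engineer with Azure Data Factory, Databricks, SQL, and Power BI experience."
resume = "Built pipelines in Azure Data Factory; wrote SQL for data warehousing."
skills_to_check = ["Azure Data Factory", "Databricks", "SQL", "Power BI", "Python"]

print(missing_skills(posting, resume, skills_to_check))  # ['Databricks', 'Power BI']
```

Add a flagged skill to your resume only if you genuinely have it, and use the posting's own wording when you do.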

10 skills that appear on successful Azure data engineer resumes

Make your resume more appealing to employers by highlighting key skills relevant to Azure data engineer positions. These skills attract recruiters and demonstrate your expertise. You can see them in context in our resume examples.

Here are 10 skills you should consider including in your resume if they align with your experience and job expectations:

- Data modeling

- ETL processes

- SQL proficiency

- Cloud computing expertise

- Big data technologies

- Data warehousing

- Data analysis

- Machine learning basics

- Problem-solving abilities

- Collaboration and teamwork

Based on analysis of 5,000+ data systems administration professional resumes from 2023-2024

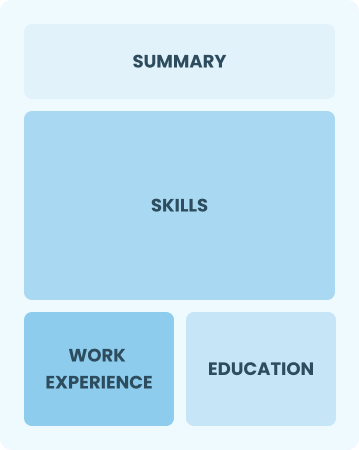

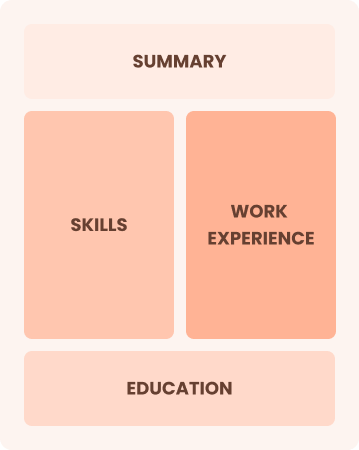

Resume Format Examples

Selecting the appropriate resume format is important for an Azure Data Engineer because it highlights your technical expertise, relevant projects, and career advancement in a clear and organized way.

Functional

Focuses on skills rather than previous jobs

Best for: Recent graduates and career changers with up to two years of experience

Combination

Balances skills and work history equally

Best for: Mid-career professionals focused on demonstrating their skills and growth potential

Chronological

Emphasizes work history in reverse order

Best for: Seasoned experts driving data solutions and team leadership

Frequently Asked Questions

Should I include a cover letter with my Azure data engineer resume?

Absolutely! Including a cover letter is a great way to highlight your skills and passion for the Azure data engineer role. It allows you to connect your experience directly to the job requirements, making your application more compelling. For tips on crafting the perfect cover letter, explore our resources on how to write a cover letter. You can also use our Cover Letter Generator to make the process quick and easy.

Can I use a resume if I’m applying internationally, or do I need a CV?

For international job applications, use a CV when the employer requests one or when you are applying in regions where CVs are standard, such as Europe. Explore our resources for CV examples and guidance on how to write a CV that aligns with international expectations.

What soft skills are important for Azure data engineers?

Soft skills such as communication, problem-solving, and collaboration are essential for Azure data engineers. These interpersonal skills foster effective teamwork and facilitate clear discussions with stakeholders, ensuring that data solutions meet business needs and improve overall project success.

I’m transitioning from another field. How should I highlight my experience?

Highlight transferable skills like analytical thinking, project management, and communication from your previous roles. These competencies illustrate your ability to adapt and add value even if you lack direct Azure Data Engineer experience. Use concrete examples to link your past successes to relevant responsibilities in data engineering, showcasing how you can make a difference.

Should I include a personal mission statement on my Azure data engineer resume?

Including a personal mission statement on your resume is recommended. This element highlights your values and career goals, making it particularly effective for companies that prioritize innovation and collaboration in data-driven environments, such as tech firms or organizations focused on digital transformation.

How do I add my resume to LinkedIn?

To increase your visibility on LinkedIn, you can upload your resume directly to your profile or highlight key skills and projects in the "About" and "Experience" sections. Either approach helps recruiters identify Azure data engineers with the right expertise, making you a more appealing applicant in the job market.