Hard skills encompass programming in ABAP, debugging code, and developing custom reports, all of which are important for an ABAP developer to create effective software solutions.

Popular ABAP Developer Resume Examples

Check out our top ABAP developer resume examples that showcase key skills such as programming expertise, problem-solving abilities, and project management experience. These examples will help you effectively highlight your strengths to potential employers.

Ready to build your ideal resume? Our Resume Builder offers user-friendly templates specifically designed for tech professionals, making it simple to create a standout application.

Entry-level ABAP developer resume

This entry-level resume for an ABAP Developer highlights the applicant's technical skills and accomplishments in optimizing ERP workflows and developing SAP modules. New professionals in this field should demonstrate their problem-solving abilities, project contributions, and relevant certifications to make a compelling case for their potential despite limited direct experience.

Mid-career ABAP developer resume

This resume effectively showcases the applicant's extensive ABAP development experience and technical skills, indicating their readiness for more complex projects and leadership roles. The demonstrated ability to improve system efficiency and collaborate across teams highlights a strong career trajectory in SAP solutions.

Experienced ABAP developer resume

The work history section highlights the applicant's extensive experience as an ABAP Developer, showcasing impressive achievements such as a 30% increase in efficiency from an SAP module upgrade. The clear bullet points improve readability, making it easy for potential employers to quickly grasp key accomplishments.

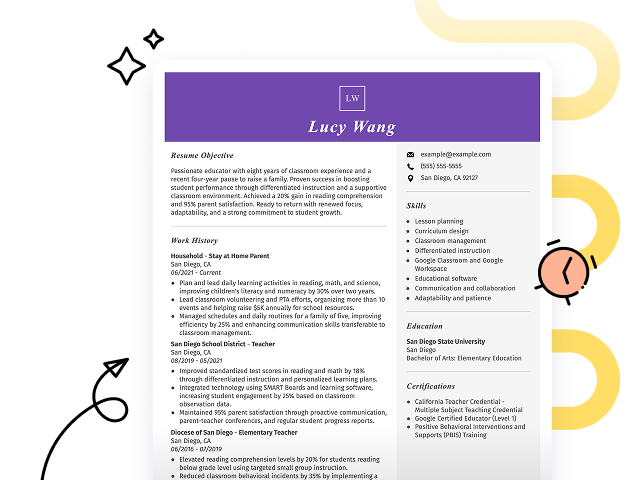

Resume Template—Easy to Copy & Paste

Emily Wilson

Riverside, CA 92504

(555)555-5555

Emily.Wilson@example.com

Skills

- ABAP Programming

- SAP Debugging

- Module Integration

- BAPI Development

- End-User Training

- ERP Analytics

- Custom Code Optimization

- Problem Solving

Certifications

- Certified ABAP Developer - SAP America

- Professional Agile Leader - Scrum Alliance

- SAP Certified Development Associate - SAP SE

Languages

- Spanish - Beginner (A1)

- French - Beginner (A1)

- Mandarin - Beginner (A1)

Professional Summary

Dynamic ABAP Developer skilled in enhancing processing efficiency, module integration, and backend optimization within SAP environments. Expert in creating custom solutions that improve system performance and save costs for enterprises.

Work History

ABAP Developer

Enterprise Solutions Ltd. - Riverside, CA

May 2023 - December 2025

- Reduced processing time by 40%

- Developed 12+ BAPIs for client systems

- Optimized backend efficiency by 15%

SAP Systems Analyst

Innovate Tech Systems - Lakeside, CA

July 2020 - April 2023

- Led SAP upgrade, boosting speed by 25%

- Implemented new modules, saving $150K annually

- Developed security protocols increasing safety by 30%

ERP Software Specialist

NexCode Technologies - San Diego, CA

January 2019 - June 2020

- Saved $200K by optimizing software usage

- Delivered 20+ enhancements in SAP projects

- Trained team, enhancing productivity by 35%

Education

Master of Science, Computer Science

Stanford University - Stanford, California

May 2018

Bachelor of Science, Information Technology

University of Washington - Seattle, Washington

May 2016

How to Write an ABAP Developer Resume Summary

Your resume summary is the first thing employers will see, so it shapes their first impression. As an ABAP developer, you should emphasize your programming skills and experience with SAP systems to stand out in this competitive field. The two examples that follow show what works well and which pitfalls to avoid:

Weak example:

I am an experienced ABAP developer with a solid background in programming and some project experience. I hope to find a position that allows me to use my skills effectively and grow professionally. A good work environment where I can learn more is important to me. I believe I would be a great addition if given the chance.

- Lacks specific examples of technical skills or projects, making it too generic

- Focuses on the applicant's expectations instead of highlighting what they can contribute to the employer

- Uses vague language like 'some project experience' which does not demonstrate actual achievements or expertise

Strong example:

Proficient ABAP developer with over 6 years of experience in designing, developing, and maintaining SAP applications. Increased system performance by 30% through optimized coding practices and enhancements in existing programs. Expertise in data migration, report generation, and integration with various SAP modules including SD and MM.

- Starts with specific experience level and area of expertise in ABAP development

- Incorporates quantifiable achievement demonstrating a measurable improvement in system performance

- Highlights relevant technical skills and competencies that are critical for an ABAP developer role

Showcasing Your Work Experience

The work experience section is the core of an ABAP developer resume and typically holds most of its content. Good resume templates prioritize this section to highlight your professional journey.

This section should be organized in reverse-chronological order, detailing your previous roles. By using bullet points, you can succinctly describe your achievements and contributions in each position, helping hiring managers quickly understand your impact.

To further illustrate how to create effective work history entries for ABAP developers, we’ll present a couple of examples that demonstrate what works well and what might not resonate.

Weak example:

ABAP Developer

Tech Solutions Inc. – Austin, TX

- Wrote code for programs.

- Fixed bugs in existing applications.

- Collaborated with team members.

- Participated in meetings to discuss projects.

- Lacks specific employment dates

- Bullet points are overly vague and fail to highlight unique skills

- Emphasizes everyday tasks rather than showcasing successful projects or contributions

Strong example:

ABAP Developer

Tech Solutions Inc. – San Francisco, CA

March 2020 - Current

- Developed and optimized ABAP programs for SAP modules, improving system performance by 30%.

- Led a team in migrating legacy systems to SAP HANA, reducing data processing time by 40%.

- Implemented automated testing procedures, decreasing bug resolution time by 25% and improving software reliability.

- Starts with powerful action verbs that clearly convey the developer's contributions

- Incorporates specific performance metrics that highlight tangible improvements

- Aligns accomplishments with essential skills for ABAP development and leadership

While your resume summary and work experience are important, don't overlook other sections that also need careful attention. Each part of your resume contributes to showcasing your qualifications. For more detailed guidance, be sure to read our comprehensive guide on how to write a resume.

Top Skills to Include on Your Resume

Including a skills section on your resume is important for effectively showcasing your qualifications. It enables job seekers to highlight their abilities while providing employers with a quick reference point for assessing suitability.

This section allows hiring managers to swiftly identify job seekers who meet essential criteria, facilitating a more efficient selection process. ABAP developer professionals should emphasize both technical and interpersonal skills, which will be discussed in detail below.

Soft skills such as teamwork, problem-solving, and communication are essential for ABAP developers. These skills foster collaboration and ensure successful project outcomes.

When selecting skills for your resume, align them with what employers expect from an ideal applicant. Many companies use applicant tracking systems (ATS) that filter out resumes lacking the necessary skills for the position.

To effectively highlight your qualifications, review job postings closely. This will help you identify the key skills that recruiters and ATS software prioritize, so your resume stands out in the hiring process.

10 skills that appear on successful ABAP developer resumes

Improve your resume to attract recruiters by highlighting the essential skills sought after in ABAP developer roles. These skills are often showcased in our resume examples, giving you a competitive edge when applying for positions.

Consider incorporating relevant skills from the list below that align with your experience and the job requirements:

- ABAP Programming

- SAP HANA

- Data Dictionary Management

- Debugging Skills

- Performance Optimization

- Modularization Techniques

- Object-Oriented Programming (OOP)

- Integration with SAP Modules

- Technical Documentation

- Collaboration in Agile Environments

Based on analysis of 5,000+ web development professional resumes from 2023-2024

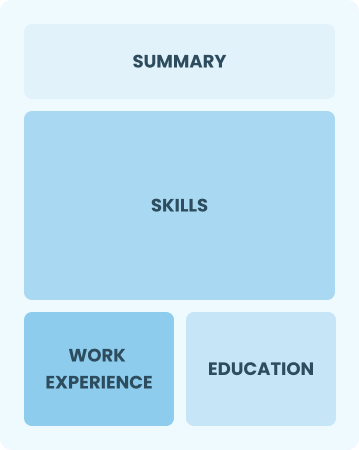

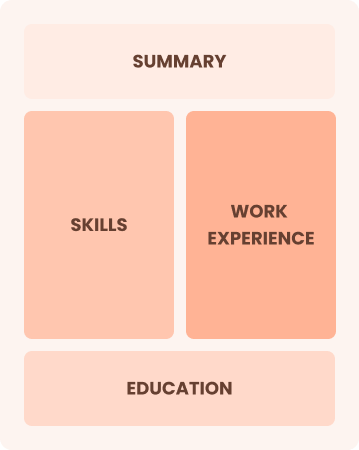

Resume Format Examples

Choosing the right resume format is important for an ABAP developer, as it effectively showcases your programming skills, relevant projects, and career growth to potential employers.

Functional

Focuses on skills rather than previous jobs

Best for:

Recent graduates and career changers with up to two years of experience

Combination

Balances skills and work history equally

Best for:

Mid-career developers eager to highlight their skills and pursue growth opportunities

Chronological

Emphasizes work history in reverse order

Best for:

Seasoned developers leading innovative ABAP solutions in large enterprises

Frequently Asked Questions

Should I include a cover letter with my ABAP developer resume?

Absolutely, including a cover letter is essential for showcasing your unique skills and passion for the ABAP developer role. It gives you the chance to explain your experience in depth and connect with potential employers. For help crafting a compelling cover letter, consider using our Cover Letter Generator or refer to our comprehensive guide on how to write a cover letter.

Can I use a resume if I’m applying internationally, or do I need a CV?

When applying for jobs outside the U.S., a CV is often required instead of a resume. A CV provides a comprehensive overview of your career, education, and accomplishments. For guidance on how to write a CV, explore our resources, which offer templates and tips tailored to international standards. Reviewing CV examples can also help you understand the structure and content expected in different regions.

What soft skills are important for ABAP developers?

Soft skills like problem-solving, collaboration, and adaptability are essential for ABAP developers. These abilities support effective communication with team members and clients and foster a positive working environment.

I’m transitioning from another field. How should I highlight my experience?

Highlight your transferable skills such as analytical thinking, teamwork, and adaptability when applying for ABAP developer positions. Even if you lack direct experience in programming, relate past achievements to problem-solving tasks and project management. Specific examples can illustrate how you can bring value to development projects and contribute effectively right from the start.

How should I format a cover letter for an ABAP developer job?

To format a cover letter, start by including your name and contact details. This should be followed by a formal greeting, an engaging introduction that highlights your interest in the ABAP developer role, and a concise summary of your relevant skills. Always customize the content to align with the job requirements and finish with a strong call to action inviting further discussion.

How do I add my resume to LinkedIn?

To improve your professional visibility, make sure to add your resume to LinkedIn. You can either upload it directly or summarize key achievements in the "About" and "Experience" sections. This strategy helps potential employers find qualified ABAP developers like you, increasing your chances of connecting with the right opportunities.